VSI Curl Support

Posted in GDAL/OGR, Locality and Space, Software on October 4th, 2010 at 06:14:47In a conversation at FOSS4G, Schuyler and I sat down with Frank Warmardam to chat about the possibility of extending GDAL to be able to work more cleanly when talking to files over HTTP. After some brief consideration, he agreed to do some initial work on getting a libcurl-based VSIL wrapper built.

VSIL is an API inside of GDAL that essentially allows you to treat files which are accessed through different streaming protocols available as if they were normal files; it is used for support for accessing content inside zipped containers, and other similar data access patterns.

GDAL’s blocking strategy — that is, the knowledge of how to read sub-blocks of files in order to obtain the information it needs, rather than needing to read a larger part of the file — is designed to limit the amount of disk I/O that’s needed for rendering large rasters. A properly set up raster can limit the amount of data that needs to be read significantly, helping improve tile rendering time significantly. This type of access would also allow you to fetch metadata about remote images without the need to access an entire (possibly large) image.

As a result, we thought it might be possible to use HTTP-based access to images using this mechanism; for metadata access and other similar information over the web. Frank thought it was a reasonable idea, though he was concerned about performance. Upon returning from FOSS4G, Frank mentioned in #gdal that he was planning on writing such a thing, and Even popped up mentioning ‘Oh, right, I already wrote that, I just had it sitting around.’

When Schuyler dropped by yesterday, he mentioned that he hadn’t heard anything from Frank on the topic, but I knew that I’d seen something go by in SVN, and said so. We looked it up and found that the support had been checked into trunk, and we both sat down and built a version of GDAL locally with curl support — and were happy to find out that the /vsicurl/ driver works great!

Using the Range: header to do partial downloads, and parsing some directory listing style pages for READDIR support to find out what files are available, the libcurl VSIL support means that I can easily get the metadata about a 1.2GB TIF file with only 64kb of data transferred; with a properly overlaid file, I can pull a 200 by 200 overview of the same file while using only 800kb of data transfer.

People sometimes talk about “RESTful” services on the web, and I’ll admit that there’s a lot to that that I don’t really understand. I’ll admit that the tiff format is not designed to have HTTP ‘links’ to each pixel — but I think the fact that by fetching a small set of header information, GDAL is then able to find out where the metadata is, and request only that data, saving (in this case) more than a gigabyte of network bandwidth… that’s pretty frickin’ cool.

Many thanks to EvenR for his initial work on this, and to Frank for helping get it checked into GDAL.

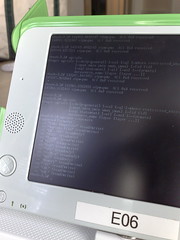

I’ll leave with the following demonstration — showing GDAL’s ability to grab an overview of a 22000px, 1.2GB tiff file in only 12 seconds over the internet:

$ time ./apps/gdal_translate -outsize 200 200 /vsicurl/http://haiticrisismap.org/data/processed/google/21/ov/22000px.tif 200.tif Input file size is 22586, 10000 0...10...20...30...40...50...60...70...80...90...100 - done. real 0m11.992s user 0m0.052s sys 0m0.128s

(Oh, and what does `time` say if you run it on localhost? From the HaitiCrisisMap server:

real 0m0.671s user 0m0.260s sys 0m0.048s

)

Of course, none of this compares as a real performance test, but to give an example of the comparison in performance for a single simple operation:

$ time ./apps/gdal_translate -outsize 2000 2000

/vsicurl/http://haiticrisismap.org/data/processed/google/21/ov/22000px.tif 2000.tif

Input file size is 22586, 10000

0...10...20...30...40...50...60...70...80...90...100 - done.

real 0m1.851s

user 0m0.556s

sys 0m0.272s

$ time ./apps/gdal_translate -outsize 2000 2000

/geo/haiti/data/processed/google/21/ov/22000px.tif 2000.tif

Input file size is 22586, 10000

0...10...20...30...40...50...60...70...80...90...100 - done.

real 0m1.452s

user 0m0.508s

sys 0m0.124s

That’s right, in this particular case, the difference between doing it via HTTP and doing it via the local filesystem is only .4s — less than 30% overhead, which is (in my personal opinion) pretty nice.

Sometimes, I love technology.